This is a DevOps series. It covers containerizing a microservices e-commerce application, pushing images to AWS ECR, deploying to an EKS cluster, managing configuration with Helm, automating the pipeline with GitHub Actions, and wiring up observability with CloudWatch.

To understand the deployment, the application has to make sense first: the services, how they communicate, why each one has its own database. That context matters when writing Kubernetes manifests, configuring health checks, or debugging a failing pod.

So this series starts with the application, then moves into the infrastructure.

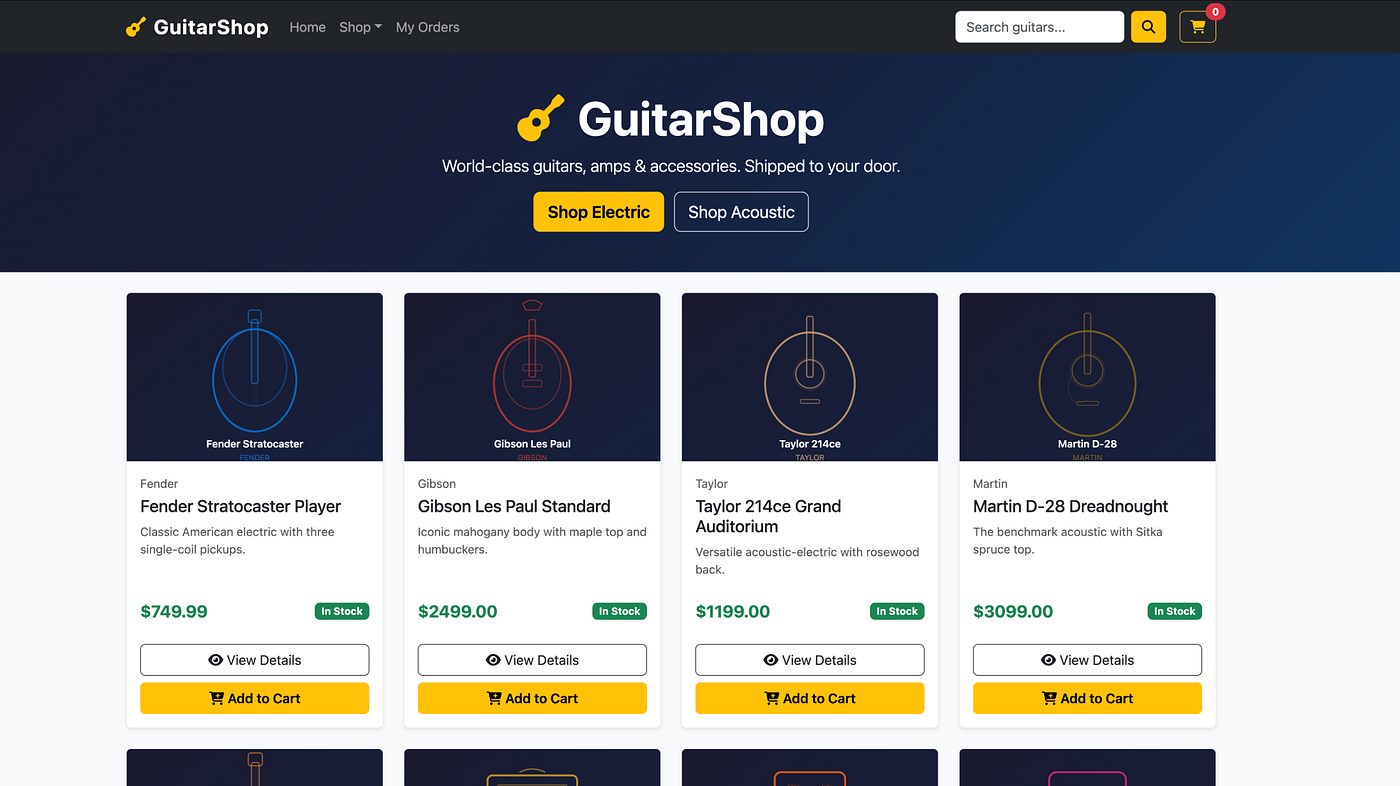

I. The Application: GuitarShop

GuitarShop is a fully functional e-commerce store for guitars, amps, and accessories. Users can browse a catalog, add items to a cart, place an order, and review their order history.

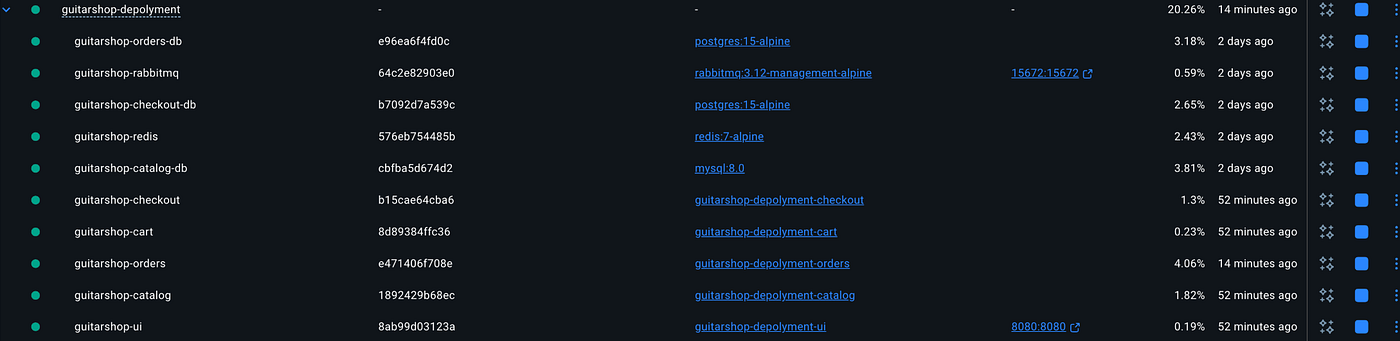

The system is built as five independent services rather than a monolith. Each service has its own codebase and its own container, and each backend service owns its own data store. Services communicate synchronously over HTTP and asynchronously through RabbitMQ.

Application Services

- UI Service: Java 17 + Spring Boot + Thymeleaf

- Catalog Service: Go 1.21

- Cart Service: Java 17 + Spring Boot

- Checkout Service: Node.js 18 + Express

- Orders Service: Java 17 + Spring Boot

Databases / Messaging

- Catalog DB: MySQL 8

- Cart DB: Redis 7

- Checkout DB: PostgreSQL 15

- Orders DB: PostgreSQL 15

- Message Broker: RabbitMQ 3.12

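Ten containers run together to support the application: five services, four databases, and one message broker. They span three languages (Java, Go, and Node.js) and four databases across three engines (MySQL, Redis, and PostgreSQL), each selected based on the needs of the service rather than convenience.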

II. Why This Architecture Matters for DevOps

A monolith is a single deployable unit. A microservices system is many units that have to be built, configured, and deployed together. That changes how Dockerfiles are written, how Kubernetes manifests are organized, how configuration is managed, and how failures are investigated across services.

Each architectural decision in GuitarShop directly impacts the infrastructure layer:

- Different languages: different Dockerfiles, base images, and build pipelines.

- Database per service: separate deployments, secrets, and storage.

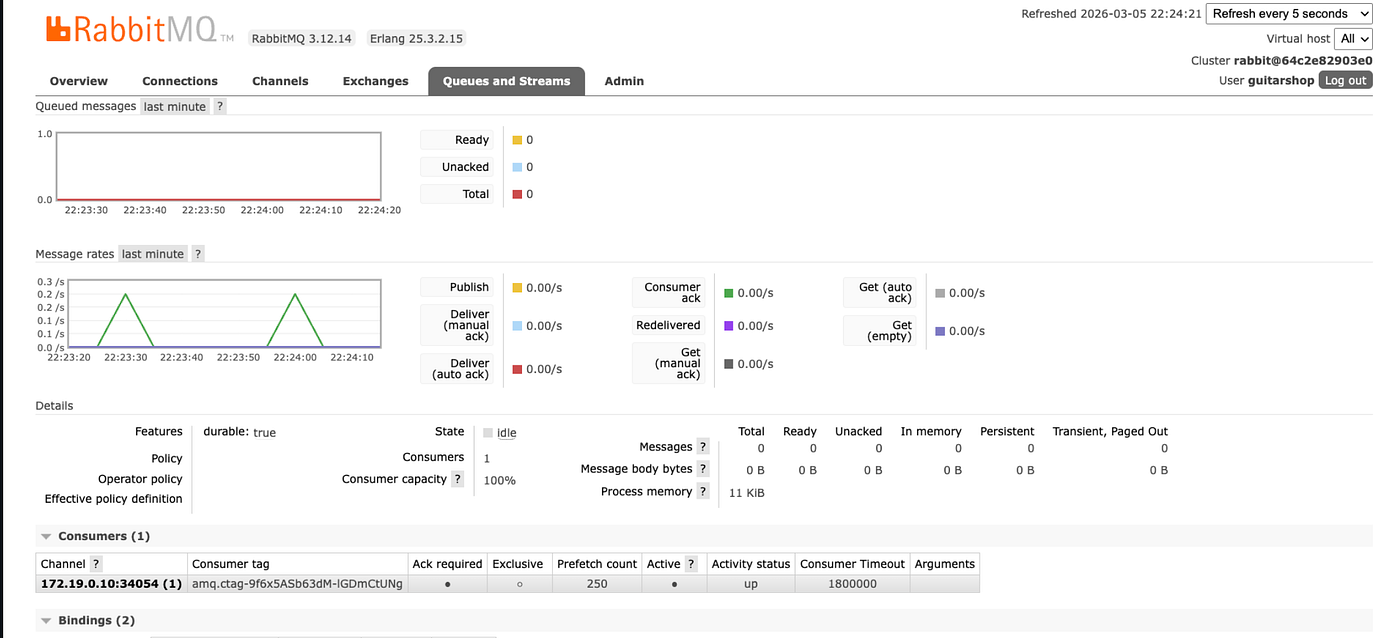

- RabbitMQ messaging: asynchronous communication between services.

- UI gateway: a single external entry point for the system.

Knowing how the application is structured makes the infrastructure decisions easier to follow.

III. How the Request Flow Works

The browser interacts with the UI Service on port 8080. The UI service then routes each request to the appropriate backend service and assembles the response returned to the user.
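The routing step can be pictured as a lookup from request path to backend. The real UI Service is a Spring Boot application, so the JavaScript below is only a minimal sketch of the idea; the path prefixes, hostnames, and ports are assumptions made for illustration and are not taken from the repository.

```javascript
// Hypothetical route table: prefixes and backend URLs are illustrative
// assumptions, not values from the GuitarShop codebase.
const ROUTES = [
  { prefix: "/catalog", backend: "http://catalog:8080" },
  { prefix: "/cart", backend: "http://cart:8080" },
  { prefix: "/checkout", backend: "http://checkout:8080" },
  { prefix: "/orders", backend: "http://orders:8080" },
];

// Return the backend base URL for a request path, or null when no
// backend owns that path.
function resolveBackend(path) {
  const route = ROUTES.find(
    (r) => path === r.prefix || path.startsWith(r.prefix + "/")
  );
  return route ? route.backend : null;
}

console.log(resolveBackend("/catalog/products/42")); // http://catalog:8080
console.log(resolveBackend("/cart"));                // http://cart:8080
console.log(resolveBackend("/unknown"));             // null
```

Matching on the prefix plus a trailing slash keeps a path such as `/cartoon` from being sent to the cart backend by accident.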

When an order is placed, the Checkout service writes the order to its database and publishes an ORDER_CREATED event to RabbitMQ. A confirmation is returned immediately.

The Orders service consumes that event asynchronously and stores the order in its own database. Because this happens in the background, the checkout request is not delayed.
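The checkout flow described above can be sketched without a real broker. In the example below, an in-memory queue stands in for RabbitMQ and two `Map` objects stand in for the Checkout and Orders databases. The class, the function names, and the order fields (`id`, `items`, `total`) are all hypothetical. The point is only the ordering of events: the confirmation comes back before the Orders side has stored anything.

```javascript
// In-memory stand-in for RabbitMQ: events wait in a queue until drained.
class InMemoryBroker {
  constructor() {
    this.queue = [];
    this.handlers = [];
  }
  publish(event) {
    this.queue.push(event);
  }
  subscribe(handler) {
    this.handlers.push(handler);
  }
  // Deliver every queued event to every subscriber, oldest first.
  drain() {
    while (this.queue.length > 0) {
      const event = this.queue.shift();
      for (const handler of this.handlers) handler(event);
    }
  }
}

const broker = new InMemoryBroker();
const checkoutDb = new Map(); // stand-in for the Checkout database
const ordersDb = new Map();   // stand-in for the Orders database

// Checkout side: persist locally, publish the event, confirm immediately.
function placeOrder(order) {
  checkoutDb.set(order.id, order);
  broker.publish({ type: "ORDER_CREATED", payload: order });
  return { status: "confirmed", orderId: order.id };
}

// Orders side: store the order whenever the broker delivers the event.
broker.subscribe((event) => {
  if (event.type === "ORDER_CREATED") {
    ordersDb.set(event.payload.id, event.payload);
  }
});

const confirmation = placeOrder({ id: "order-1", items: ["strat"], total: 899 });
console.log(confirmation.status, ordersDb.size); // confirmed 0
broker.drain(); // simulates the background delivery
console.log(ordersDb.size); // 1
```

Between `placeOrder` and `drain`, the order exists only in the Checkout store. That window is the price of not blocking the checkout request on the Orders service.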

IV. The Series

- Project Overview

- Polyglot Persistence: why each service uses a different database

- Containerizing Polyglot Services: Dockerfiles across Go, Java, and Node.js

- Docker Compose: running the full stack locally

- Deploying to AWS EKS: ECR, cluster setup, and Kubernetes manifests

- Helm: managing configuration and deploying with charts

- CI/CD: GitHub Actions pipeline from push to production

- Observability: CloudWatch logging and monitoring

V. Run It Locally

    git clone https://github.com/Hepher114/guitar-shop-microservices.git
    cd guitar-shop-microservices
    docker compose up --build

- Storefront: http://localhost:8080

- RabbitMQ UI: http://localhost:15672 (login: guitarshop/guitarshop123)

One command starts all containers in the correct order. Health checks ensure that each service waits for its dependencies before starting. The local environment mirrors the distributed system that will later run in Kubernetes.
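To make "the correct order" concrete, here is a small sketch that derives a start order from a dependency map. The map is an assumption inferred from the service list earlier in this article; the container names are hypothetical, and the repository's actual Compose file may declare things differently. In Compose, this kind of ordering is typically expressed with `depends_on` and `condition: service_healthy`.

```javascript
// Assumed dependency map (service -> services it waits for).
// Names are hypothetical; the real docker-compose.yml may differ.
const DEPENDS_ON = {
  "catalog-db": [],
  "cart-db": [],
  "checkout-db": [],
  "orders-db": [],
  rabbitmq: [],
  catalog: ["catalog-db"],
  cart: ["cart-db"],
  checkout: ["checkout-db", "rabbitmq"],
  orders: ["orders-db", "rabbitmq"],
  ui: ["catalog", "cart", "checkout", "orders"],
};

// Depth-first topological sort: a service is appended to the order only
// after everything it depends on has been appended.
function startOrder(dependsOn) {
  const order = [];
  const state = new Map(); // name -> "visiting" | "done"

  function visit(name) {
    if (state.get(name) === "done") return;
    if (state.get(name) === "visiting") {
      throw new Error(`dependency cycle at ${name}`);
    }
    state.set(name, "visiting");
    for (const dep of dependsOn[name] ?? []) visit(dep);
    state.set(name, "done");
    order.push(name);
  }

  Object.keys(dependsOn).forEach(visit);
  return order;
}

const order = startOrder(DEPENDS_ON);
console.log(order.join(" -> "));
```

Under these assumptions the databases and the broker come first and the UI comes last, since it depends on every backend service.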

Conclusion

Deploying microservices is not only about containers, Kubernetes manifests, or CI/CD pipelines. It begins with understanding the system itself: the services, the communication patterns, and the data boundaries between them.

In the next part of the series we will explore one of the most important design decisions behind this architecture: why each service owns its own database and how polyglot persistence supports the system.